Running Game Development over Telegram: Notes from Operating SLIME ARENA with OpenClaw

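When I first hooked up OpenClaw, my question was simple: could I run it not as a tool I fire quick commands at while sitting at my desk, but like a developer who keeps working after I step away? To find out, I bought a low-spec mini PC just for this experiment, left every permission open, and built a loop where I gave instructions through Telegram alone.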

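At first I handed it scheduling and odd jobs. After running it for a while, though, I realized that wasn't what I actually wanted to see. What I wanted to know was whether development keeps moving while I'm away, or more precisely, whether the combination of Telegram + OpenClaw + cron + GitHub issues/milestones could run a real project.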

What I wanted wasn't opening an IDE over remote desktop, but a flow where I push the next task through a minimal interface and get the results back.

The project I ran most seriously was SLIME ARENA, a 2D action game built with Godot.

How far SLIME ARENA got

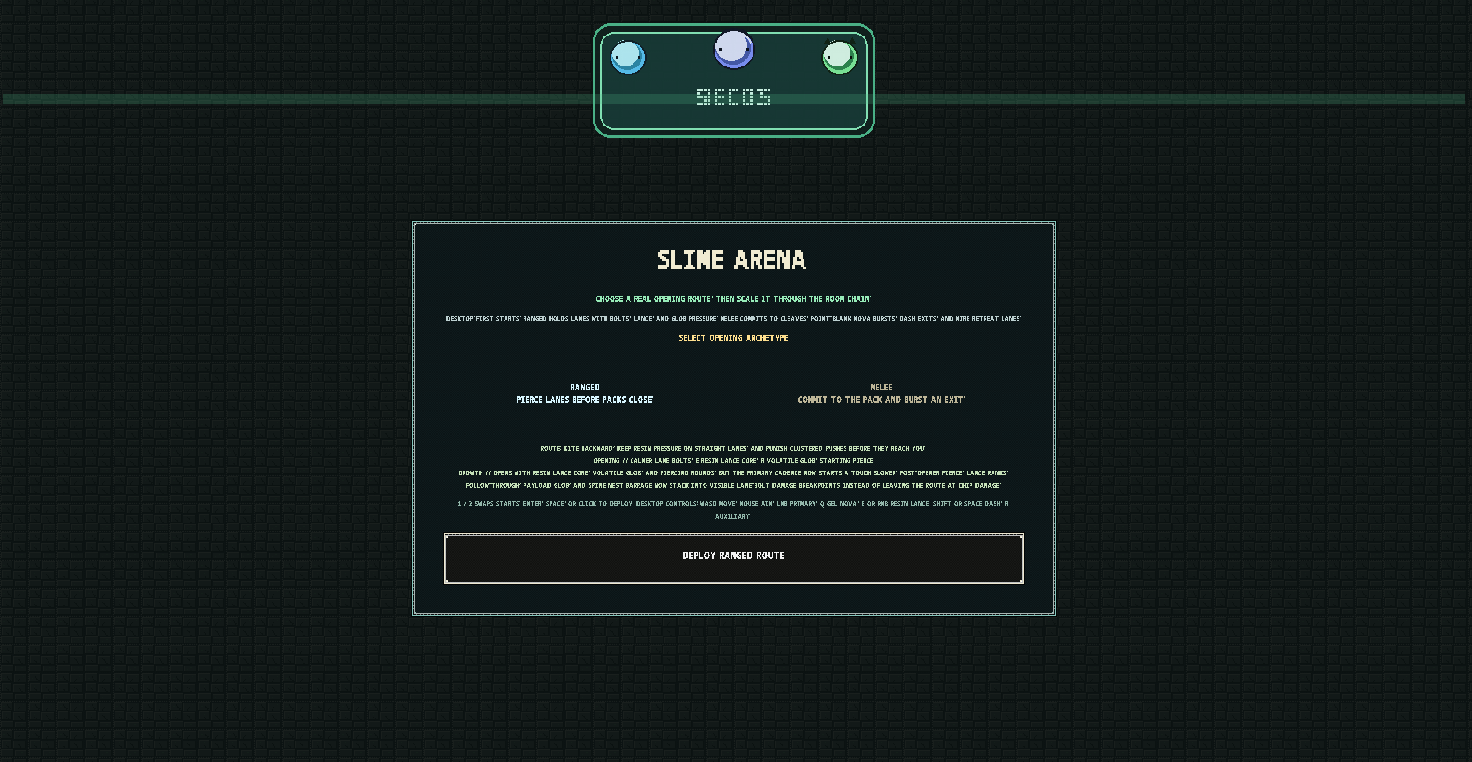

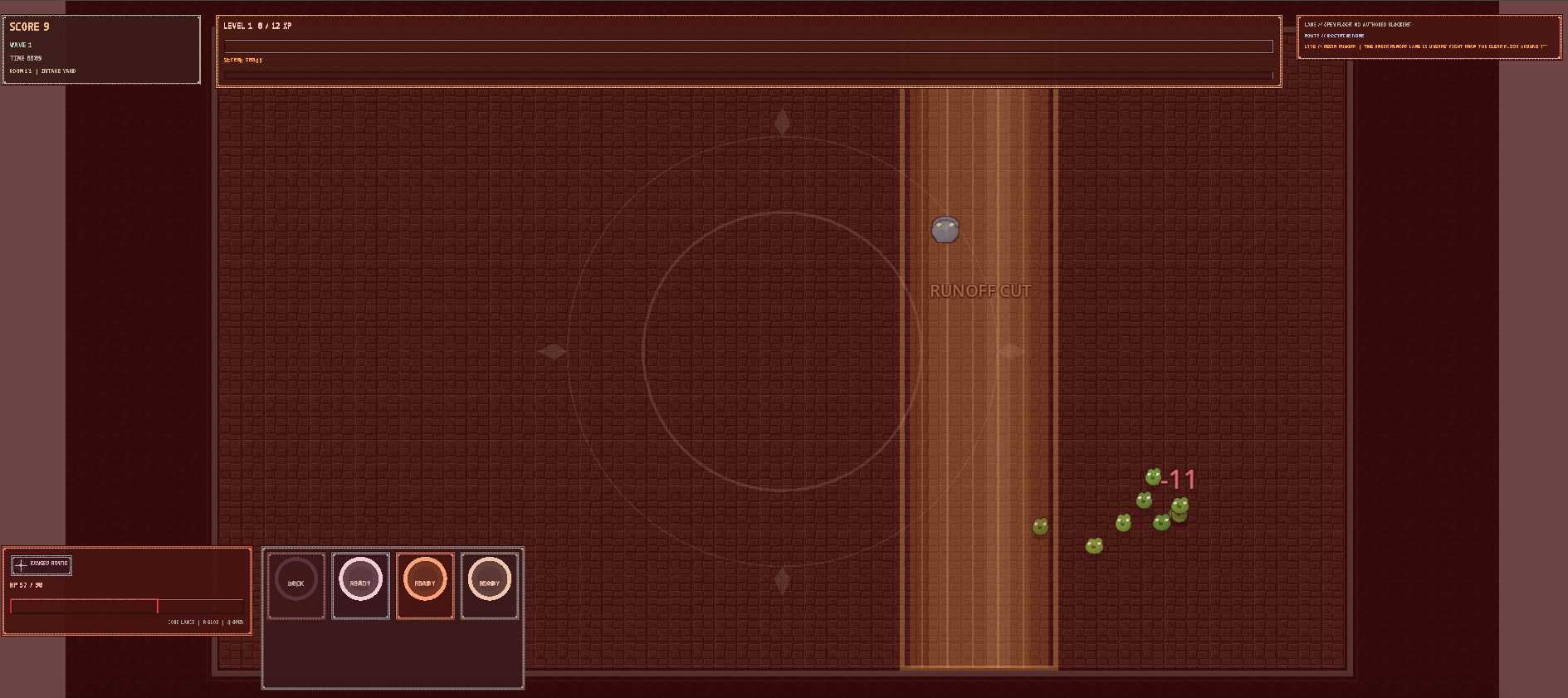

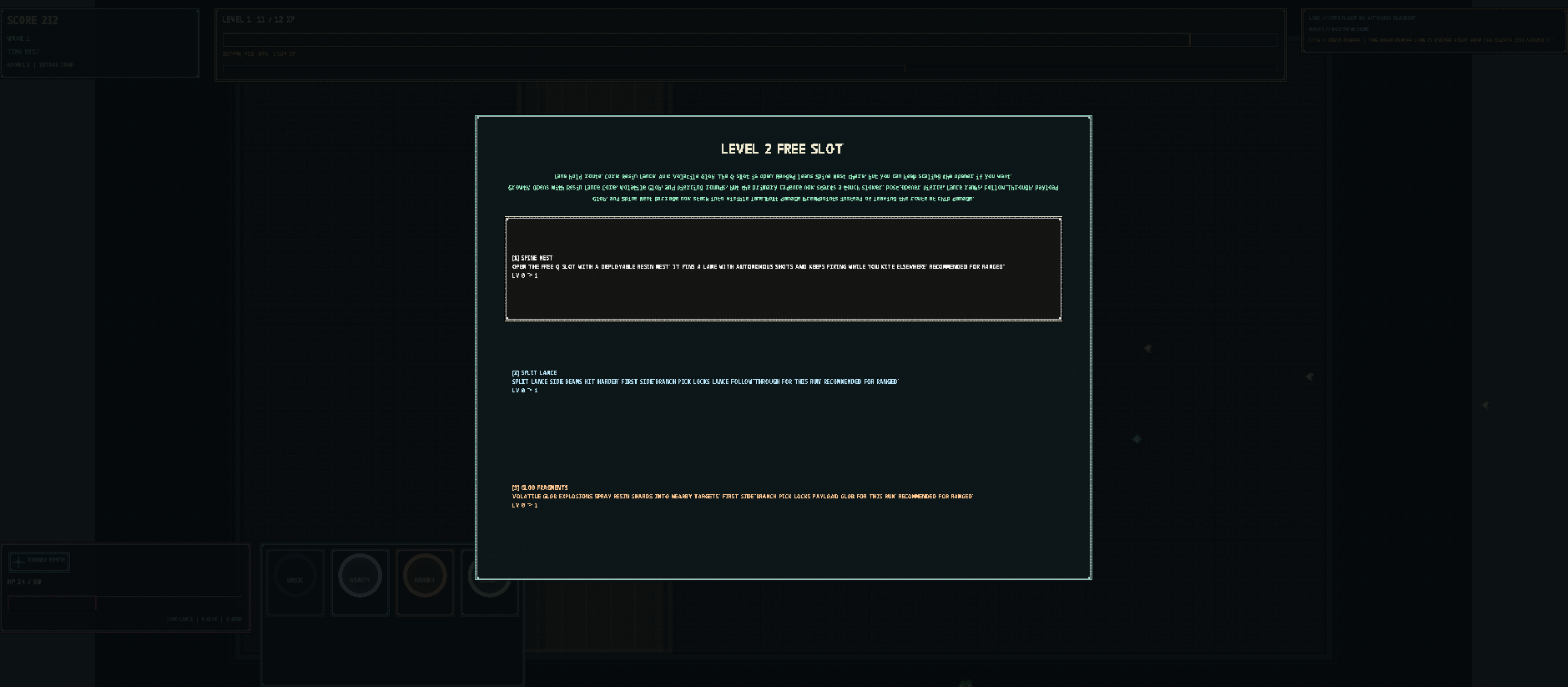

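In SLIME ARENA you pick one of two types at the start, melee or ranged, then survive waves and spend points in a skill tree every time you level up. Clearing all the monsters in a wave moves you on to the next stage, and there are bosses too. It sits somewhere between a 2D action defense game and a Vampire Survivors-like.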

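Right now it is stalled at the stage where art assets were being applied. Even so, the combat loop itself works. I kept tuning difficulty and balance for a while, and some of it actually got worse along the way. That convinced me this is an area a human ultimately has to take back and fix.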

Markdown at first, GitHub later

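At first I had it read a markdown file every 2 hours and carry on with the next task. With that approach, though, it was far too hard for me to grasp the intermediate state. Where things stood, what was blocked, and what it planned to do next never came through at a glance.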

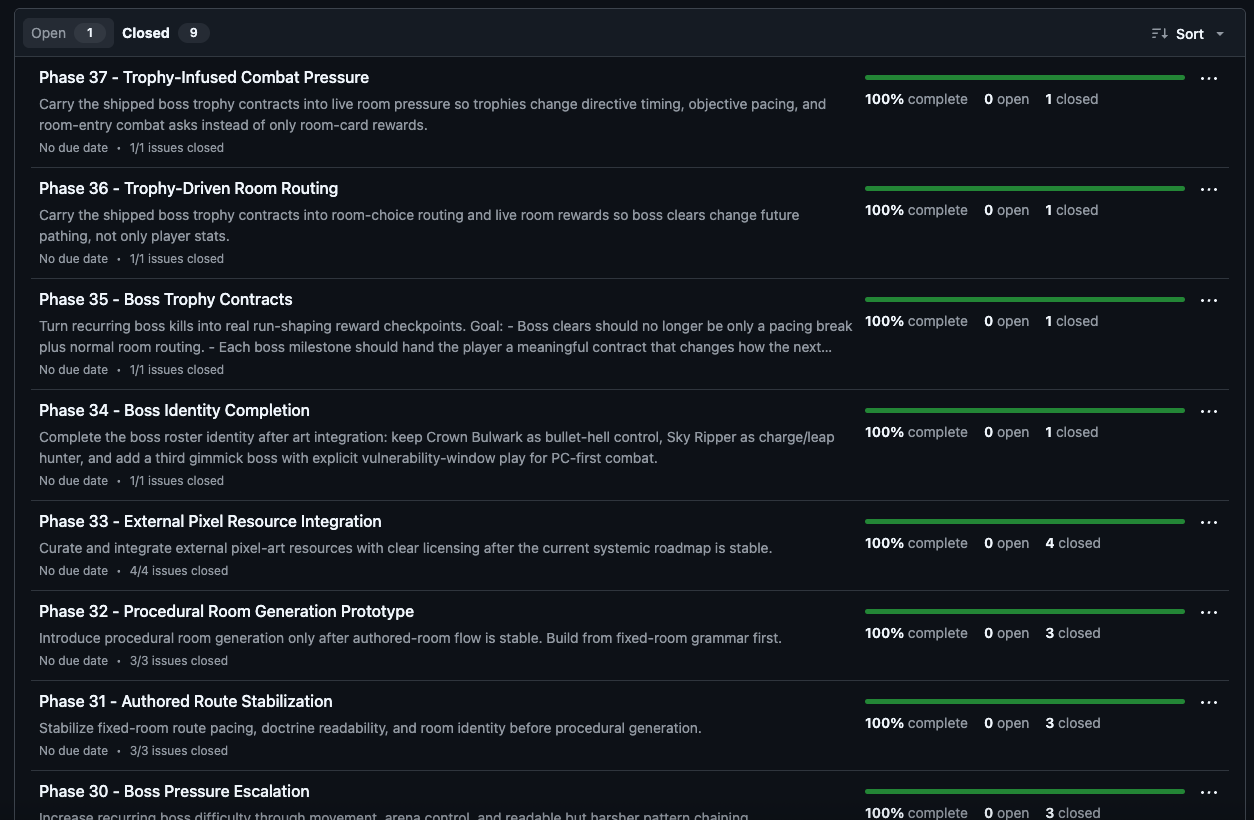

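So I changed how I ran it. I created a separate GitHub account for OpenClaw and invited it to the repository as a developer. From then on, the loop revolved around milestones and issues. OpenClaw would first set a milestone goal, create the issues needed to reach it, and close them when the work was done; if I found something lacking while testing, I reopened the issue and had it flesh out the details.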

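The important point is that I didn't organize any of this myself in an IDE; almost all of it ran through Telegram. Implementation instructions, change requests, reopening issues, reshuffling priorities: I did all of it in Telegram. GitHub was less a place to assign development work and more a board for tracking status and recording validation criteria.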

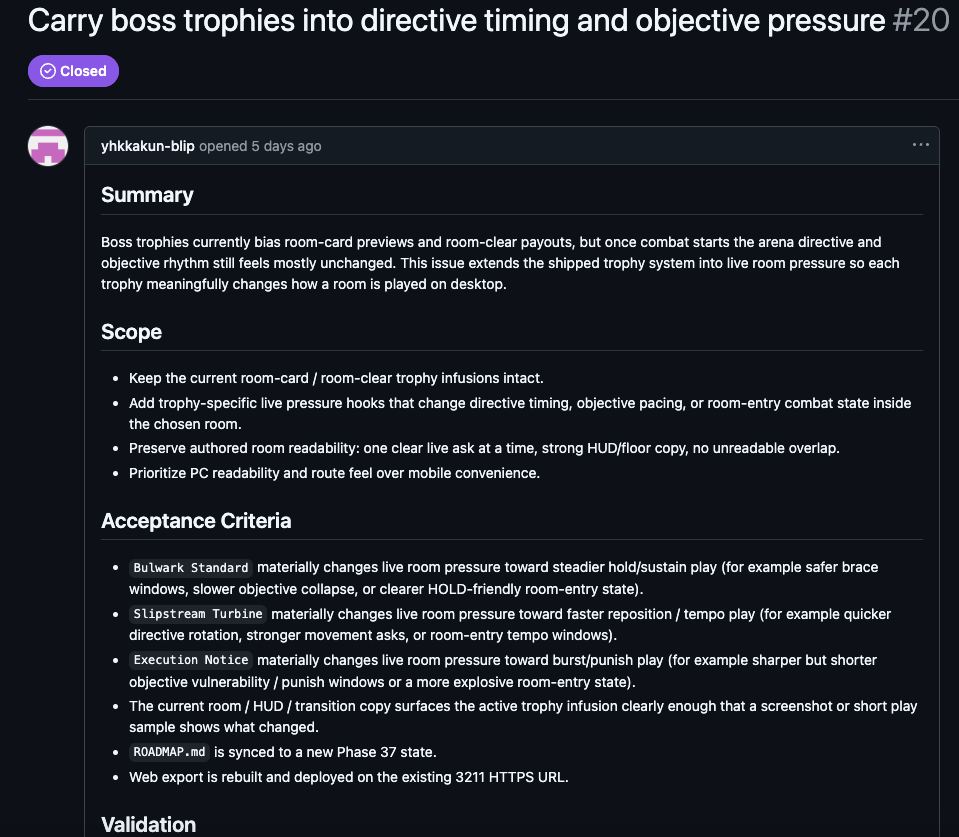

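The issue bodies weren't just to-do notes. I had it write the summary, scope, and acceptance criteria first, and once the work was done, leave a comment recording which commit handled it, which validation commands it ran, and whether it redeployed. Milestones weren't lumped into one or two either; they kept stacking up phase by phase. What I wanted to see wasn't a claim that "something is in progress" but exactly where things stood and what had been verified.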
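To make that issue format concrete, here is a minimal sketch of how an issue body and its closing comment could be rendered. The section names follow the text (summary, scope, acceptance criteria; commit, validation commands, redeploy status), but the markdown layout and the helper names `IssueSpec`, `render_issue_body`, and `render_completion_comment` are hypothetical, not OpenClaw's actual output.

```python
from dataclasses import dataclass


@dataclass
class IssueSpec:
    """Fields each issue body starts with (layout here is a hypothetical choice)."""
    summary: str
    scope: list[str]
    acceptance_criteria: list[str]


def render_issue_body(spec: IssueSpec) -> str:
    # Markdown body: summary, scope bullets, then checkbox-style acceptance criteria.
    lines = ["## Summary", spec.summary, "", "## Scope"]
    lines += [f"- {item}" for item in spec.scope]
    lines += ["", "## Acceptance criteria"]
    lines += [f"- [ ] {item}" for item in spec.acceptance_criteria]
    return "\n".join(lines)


def render_completion_comment(commit: str, validation_cmds: list[str], redeployed: bool) -> str:
    # Closing comment: which commit handled it, which checks ran, whether it was redeployed.
    lines = [f"Resolved in {commit}.", "", "Validation:"]
    lines += [f"- `{cmd}`" for cmd in validation_cmds]
    lines += ["", f"Redeployed: {'yes' if redeployed else 'no'}"]
    return "\n".join(lines)
```

Checkbox-style criteria make it easy for the human tester to see at a glance which conditions a reopened issue still fails.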

What the actual operating loop looked like

In practice, it worked roughly like this:

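- I set only the direction and priorities, from Telegram.

- OpenClaw created milestones and broke the required work into small issues.

- I had it record fields such as summary, scope, acceptance criteria, and validation in each issue.

- cron ran every 2 hours and kept pushing the next task forward.

- Completed issues were closed; if something felt lacking while I played, I reopened the issue and had it write the requirements more specifically.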
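The lifecycle in the list above (the agent closes issues, the human reopens them with sharper requirements, and a phase is finished when its issues are closed) can be modeled as a tiny state machine. This is a minimal sketch under one stated assumption, that a reopen must always add a concrete criterion; `Issue`, `Milestone`, and their methods are hypothetical and are not GitHub's or OpenClaw's API.

```python
from dataclasses import dataclass, field
from enum import Enum


class State(Enum):
    OPEN = "open"
    CLOSED = "closed"


@dataclass
class Issue:
    number: int
    title: str
    criteria: list[str]
    state: State = State.OPEN
    history: list[str] = field(default_factory=list)

    def close(self, commit: str) -> None:
        # Agent side: close only once the work has landed, recording the commit.
        if self.state is not State.OPEN:
            raise ValueError(f"#{self.number} is not open")
        self.state = State.CLOSED
        self.history.append(f"closed in {commit}")

    def reopen(self, extra_criterion: str) -> None:
        # QA side (assumed rule): a reopen must add a concrete requirement.
        if self.state is not State.CLOSED:
            raise ValueError(f"#{self.number} is not closed")
        if not extra_criterion.strip():
            raise ValueError("reopen needs a concrete criterion")
        self.criteria.append(extra_criterion)
        self.state = State.OPEN
        self.history.append(f"reopened: {extra_criterion}")


@dataclass
class Milestone:
    phase: str
    issues: list[Issue] = field(default_factory=list)

    def is_done(self) -> bool:
        # A phase counts as done only when every issue in it is closed
        # (note: an empty milestone trivially counts as done).
        return all(i.state is State.CLOSED for i in self.issues)
```

Forcing every reopen to carry a new criterion is what turns playtest impressions into something the next cron pass can act on.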

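The key phrase here is "had it do." In this loop I took on little beyond the director and QA roles, and OpenClaw drove the actual implementation. The Telegram reports weren't bare success/failure messages either. I had it post a summary of the current phase, the issue numbers handled in that pass, any blockers, the validation commands, and whether it redeployed. What I needed to check was the status, not the code.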
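The per-pass report described above could be assembled like this. It is a minimal sketch that only builds the message text from the five fields the post lists; the field names and layout are hypothetical, and how OpenClaw actually delivered messages isn't described here (a bot would typically send such text through the Telegram Bot API's `sendMessage` method).

```python
from dataclasses import dataclass


@dataclass
class PassReport:
    """One cron pass, summarized for Telegram (field names are hypothetical)."""
    phase: str
    issues_handled: list[int]
    blockers: list[str]
    validation_cmds: list[str]
    redeployed: bool


def format_report(r: PassReport) -> str:
    # Status-first text: what the human reads instead of the code.
    handled = ", ".join(f"#{n}" for n in r.issues_handled) or "none"
    blockers = "; ".join(r.blockers) or "none"
    checks = ", ".join(r.validation_cmds) or "none run"
    return "\n".join([
        f"Phase: {r.phase}",
        f"Issues this pass: {handled}",
        f"Blockers: {blockers}",
        f"Validation: {checks}",
        f"Redeployed: {'yes' if r.redeployed else 'no'}",
    ])
```

Printing "none" explicitly for empty fields keeps a quiet pass distinguishable from a report that silently dropped information.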

Continuing from anywhere was real

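The best part, unsurprisingly, was being able to continue from anywhere. Development doesn't stop when I'm away from my desk. If I leave a direction in Telegram, the work keeps moving at the next checkpoint run.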

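This setup pushed me out of the implementer role and into the director and QA roles. On SLIME ARENA I literally never looked at a single line of code. I don't know Godot; I just played the game and shared my impressions. Even so, the game's skeleton got surprisingly far: the combat loop, waves, bosses, and level-up choices.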

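Another upside was that milestones and issues let me force decisions into small units. Compared with just saying "make a game," it became much clearer what needed to be verified at each stage. I could actually run the combat loop, wave progression, boss fights, level-up choices, and balance tuning as separate tasks. And because that history stays around for later review, it beat a plain chat log by a wide margin.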

Still, there were problems

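The downsides were just as clear. First, being able to develop from anywhere turns straight into a drawback: I kept checking in even during rest breaks at the gym. Always being reachable is convenient, but it also keeps you constantly on the hook.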

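Second, keeping the cron loop running reliably was harder than I expected. With a badly written prompt, it would sometimes stop advancing and just keep reporting progress. In the end I had to spell out quite specifically what counts as done, how to pick the next step, and what to fall back on when blocked.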
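The three rules from that paragraph (what counts as done, how the next step is chosen, what to fall back on when blocked) can be written out as a single decision function. The author expressed them in a prompt, not in code, so this is only a minimal sketch: the priority ordering and the "unblock" fallback are assumptions for illustration, and `Task` and `next_action` are hypothetical names. The point is that every branch returns a concrete action, so a pass can never end as a report-only pass.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Task:
    number: int
    closed: bool
    blocked_reason: Optional[str] = None
    priority: int = 0  # lower number = more urgent (hypothetical convention)


def next_action(tasks: list[Task]) -> tuple[str, Optional[int]]:
    """Decide what one cron pass should do.

    Returns ("work", n) to implement issue n, ("unblock", n) to spend the
    pass on issue n's blocker, or ("advance", None) when the phase is done.
    """
    open_tasks = [t for t in tasks if not t.closed]
    if not open_tasks:
        # Definition of done: every issue in the phase is closed, so plan the next phase.
        return ("advance", None)
    ready = [t for t in open_tasks if t.blocked_reason is None]
    if ready:
        # Next-step rule: most urgent unblocked issue, ties broken by lowest number.
        best = min(ready, key=lambda t: (t.priority, t.number))
        return ("work", best.number)
    # Fallback (assumed): everything is blocked, so attack the most urgent
    # blocker instead of re-reporting the same status.
    stuck = min(open_tasks, key=lambda t: (t.priority, t.number))
    return ("unblock", stuck.number)
```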

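Third is review debt. When work moves forward without anyone reading the code, everything that needs checking piles up all at once later. SLIME ARENA was worse in this respect precisely because I never read a single line of its code. If you could trust the AI completely, this might not be a big problem, but at this stage the review load a human ends up carrying at the end is considerable.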

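Finally, there is cost and usage. I even bought a mini PC with every permission open for this experiment, and running it this way burns through usage very fast. In fact I used up my entire weekly GPT Pro allowance, which kept me from the work I was actually supposed to prioritize. So as of March 15, 2026, the experiment is on hold.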

Why a WebGL voxel game for the next experiment

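After SLIME ARENA, I also made some progress on a three.js-based WebGL voxel game. It follows the familiar Minecraft-style structure, but I put a lot of weight on keeping the engine side and the game side as separate as possible, because the engine part can be reused in the next project.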
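The post doesn't show the voxel project's code, and the real project is built on three.js. As a language-neutral illustration of the engine/game split, here is a minimal Python sketch, assuming the engine layer owns only generic voxel storage while the game layer defines block types and rules on top of its public `get`/`set`. Every name here (`VoxelWorld`, `BlockType`, `BLOCKS`, `try_break`) is hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

# ---- engine layer: generic voxel storage, knows nothing about game rules ----

Coord = Tuple[int, int, int]


class VoxelWorld:
    """Sparse voxel store keyed by integer coordinates; block id 0 means empty."""

    def __init__(self) -> None:
        self._cells: Dict[Coord, int] = {}

    def get(self, pos: Coord) -> int:
        return self._cells.get(pos, 0)

    def set(self, pos: Coord, block_id: int) -> None:
        if block_id == 0:
            self._cells.pop(pos, None)  # clearing a cell keeps the store sparse
        else:
            self._cells[pos] = block_id

    def count(self) -> int:
        return len(self._cells)


# ---- game layer: block meanings and rules live here, on top of the engine ----

@dataclass(frozen=True)
class BlockType:
    name: str
    breakable: bool


BLOCKS: Dict[int, BlockType] = {
    1: BlockType("dirt", breakable=True),
    2: BlockType("bedrock", breakable=False),
}


def try_break(world: VoxelWorld, pos: Coord) -> bool:
    # A game rule expressed only through the engine's public get/set.
    block = BLOCKS.get(world.get(pos))
    if block is None or not block.breakable:
        return False
    world.set(pos, 0)
    return True
```

Replacing the game layer (a different `BLOCKS` table and rules) would leave `VoxelWorld` untouched, which is the reuse argument in the paragraph above.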

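These days I feel the overall direction in AI is web-friendly, so I expect web-based games and web game engines to grow considerably. That doesn't mean AAA games will collapse; I think it's closer to the market polarizing further toward the top and bottom. This is still more hypothesis than conclusion, but it is clearly why I chose WebGL for the next experiment.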

It wasn't a toy

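After hooking OpenClaw up to Telegram and running it for real, it felt less like an assistant you briefly command from outside and more like managing a remote developer. To run it properly, you have to design milestones, issues, cron, and validation criteria together. Once those are in place, it goes further than you'd expect.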

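That said, the management overhead is just as real. You have to factor in usage, review debt, and the operational fatigue of always having it on your mind. My conclusion for now is simple. OpenClaw wasn't a fun toy. It was a tool that, run without controls, quickly grows into something you can't keep up with.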