The State of Vibe Coding, as Seen Through FlyingCat

FlyingCat was the first project that made me take a second look at vibe coding. In this post I want to focus on why this particular game changed my standards.

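Until last summer, vibe coding was fairly disappointing. The same request produced inconsistent results, and too much about it felt shaky for real work. Over the following few months, though, my impression changed noticeably. I started to think it was worth going beyond tossing it a few questions and trying an experiment where I handed over an entire small project.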

Why FlyingCat

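The inspiration was the old Flash game NANACA CRASH, also known in Korea as 남친 날리기 (roughly, "Launch the Boyfriend"). You set an angle and power to launch a character, then extend the distance by hitting obstacles and supporters along the way. A friend suggested I try making a game of this kind, and I gave it a cat-and-hamster twist to arrive at FlyingCat.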

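This kind of game made a good test subject because there was more to verify than it first appears. Angle and power control, state transitions during flight, obstacle effects, UI, a WebGL build, and a ranking system add up to a lot of work, even for a small game. I thought it would expose the strengths and weaknesses of vibe coding better than an app with a handful of buttons.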

What I Delegated, and How Far

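I used a single tool, Antigravity, and stuck with a single model, Gemini 3.0 Pro. The engine was Unity, and the UI was built with UIToolkit. My expectation at the time was that if Gemini leaned toward front-end work, it might also handle USS-based UI well, and that guess turned out to be largely right. The image assets were made with NanoBanana.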

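The important part is that I deliberately kept my hands off the code. The goal of this experiment was less about finishing a game quickly and more about experiencing Agentic Coding firsthand. I had started building the game myself and attached Antigravity partway through, but once it was attached I held back and watched, even when I was itching to step in. Instead, I drew up a Plan first and intervened only by leaving comments on specific lines or on the direction of a change, then approving it.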

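The rhythm was roughly 3 to 4 days of letting the AI implement, followed by 3 to 4 days of me reviewing the changes and resetting the direction. This was far from full automation, but the sense that I could move the project forward without typing the code myself was significant.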

The Game That Came Out

The actual game loop ran as follows.

- 각도를 조절한다.

- 파워를 조절한다.

- 햄스터를 날린다.

- 비행 중

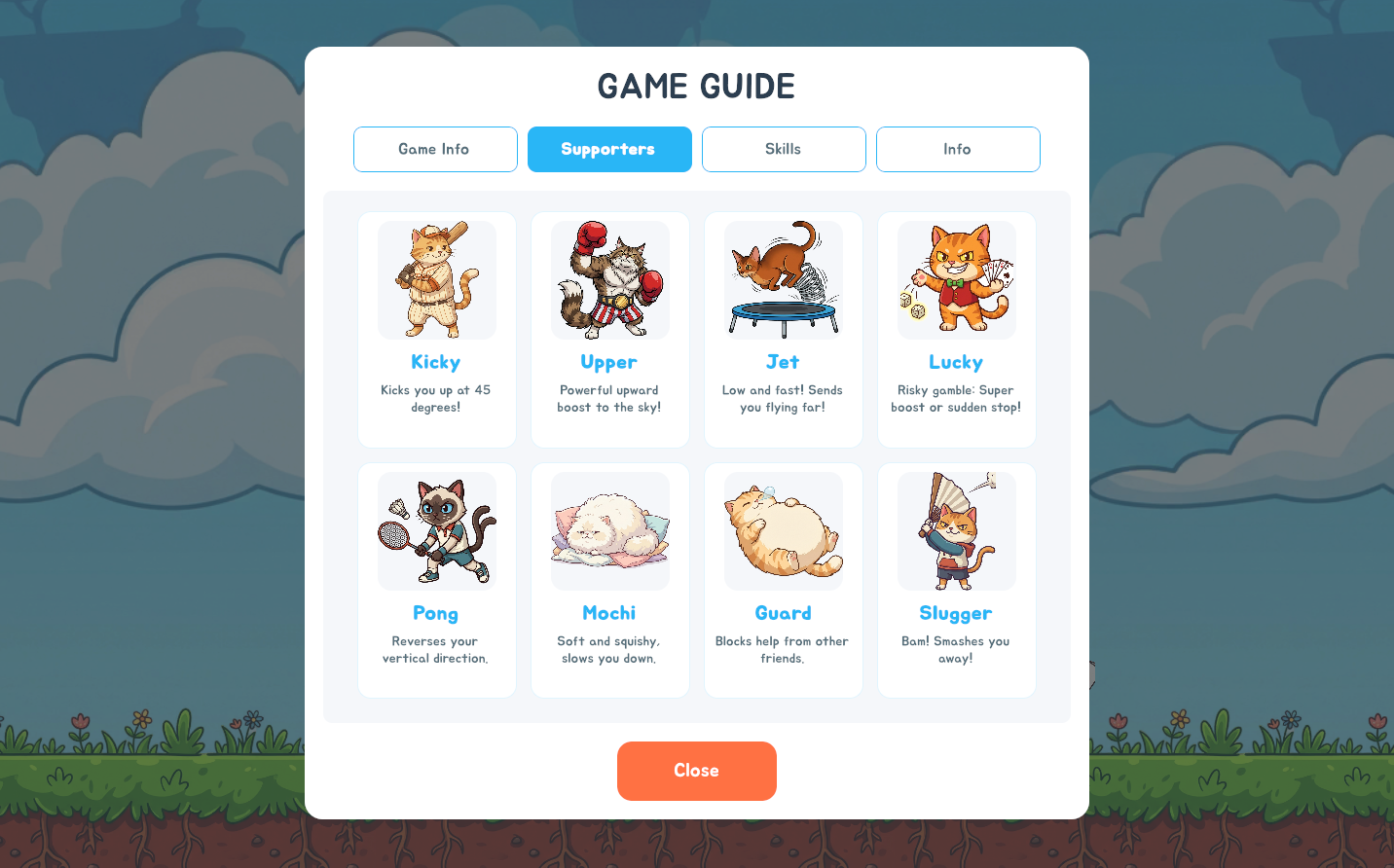

업/다운스킬을 써서 방향을 바꾼다. - 길 중간의 고양이, 장애물, 서포터 효과를 잘 받아 최대한 멀리 간다.

- 완전히 멈추면 게임 오버다.

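The mid-flight effects were fairly varied too. Some relaunch the hamster at 45 or 60 degrees, some push it horizontally, some cut its speed, and some make it immune to collisions for a few seconds. It looks simple on the surface, but once you get into the implementation, physics and state transitions are constantly entangled.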

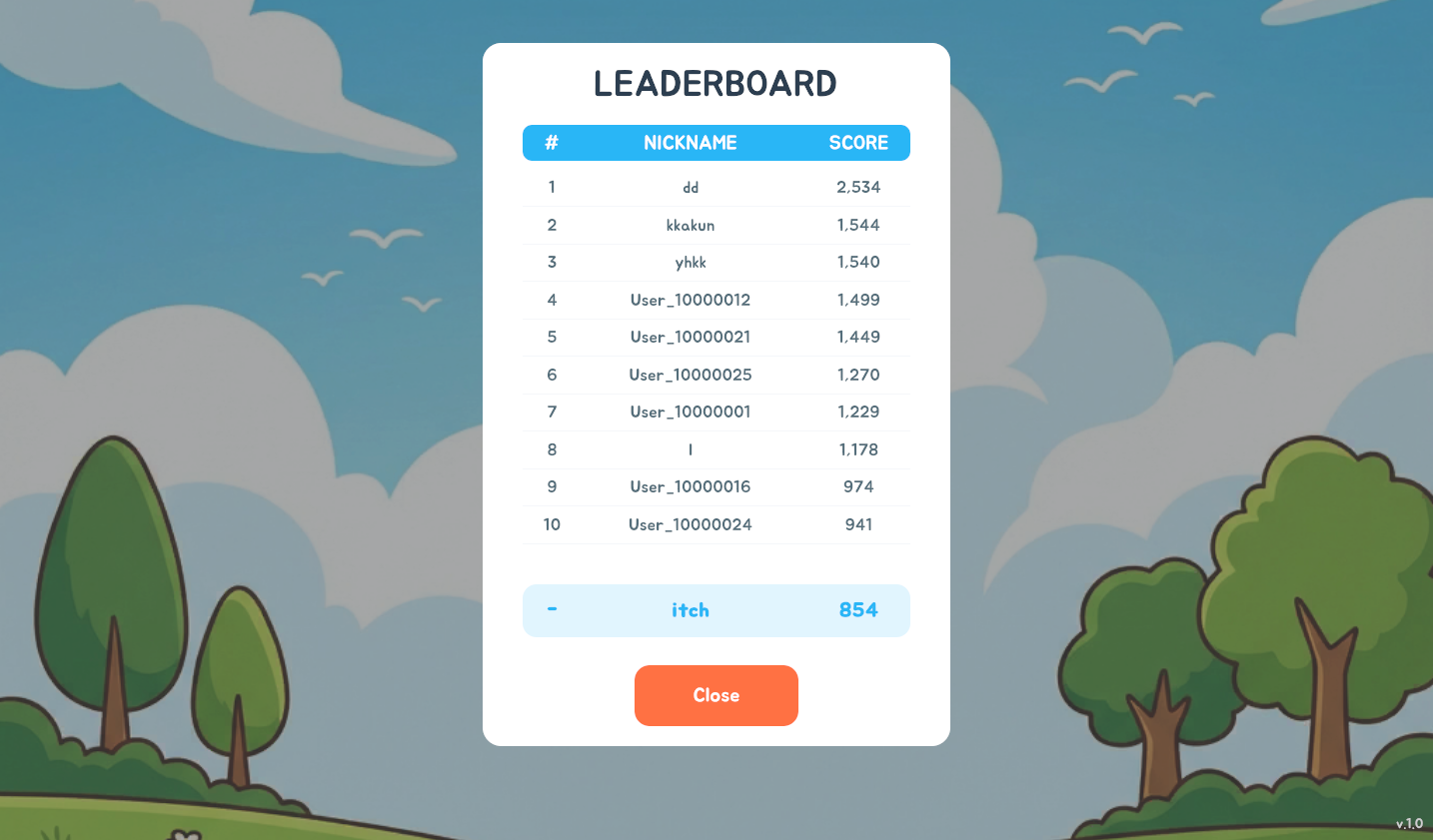
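To make the effects above concrete, here is a minimal Python sketch of how they could be modeled as small functions over a flight state. This is not the project's actual Unity C# code. Every name here (`FlightState`, `relaunch`, `push_horizontal`, `slow_down`, `grant_invulnerability`) is hypothetical, and the choice to preserve the current speed on a relaunch or a horizontal push is an assumption made for illustration.

```python
import math
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class FlightState:
    """Hypothetical flight state; field names are illustrative only."""
    vx: float                      # horizontal velocity
    vy: float                      # vertical velocity (up is positive)
    invulnerable_for: float = 0.0  # seconds of remaining collision immunity


def speed(s: FlightState) -> float:
    """Magnitude of the velocity vector."""
    return math.hypot(s.vx, s.vy)


def relaunch(s: FlightState, angle_deg: float) -> FlightState:
    """Redirect the current speed along a fixed angle (e.g. 45 or 60 degrees).

    Assumption: the effect keeps the speed and only changes the direction.
    """
    v = speed(s)
    a = math.radians(angle_deg)
    return replace(s, vx=v * math.cos(a), vy=v * math.sin(a))


def push_horizontal(s: FlightState) -> FlightState:
    """Flatten the trajectory: all of the speed goes into horizontal motion."""
    return replace(s, vx=speed(s), vy=0.0)


def slow_down(s: FlightState, factor: float) -> FlightState:
    """Scale both velocity components by a factor between 0 and 1."""
    return replace(s, vx=s.vx * factor, vy=s.vy * factor)


def grant_invulnerability(s: FlightState, seconds: float) -> FlightState:
    """Ignore collisions for at least the given number of seconds."""
    return replace(s, invulnerable_for=max(s.invulnerable_for, seconds))
```

Keeping each effect as a pure function that returns a new state is one way to make them easy to check in isolation before they meet the rest of the physics.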

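The result was a WebGL game that actually runs. I uploaded it to itch.io, and with no promotion beyond organic exposure, roughly 10 people played it. I did not stop there: I also added a ranking feature backed by AWS Cognito and Lambda. My AWS knowledge came from light certification study with almost no hands-on experience, but I got it wired up all the way by asking Gemini as I went.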

It Went Further Than I Expected

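The first thing I felt was speed. I was quite surprised to watch the AI push out more in a single day than I had managed in a month of working on it while worn out after my day job. Admittedly I had been moving slowly to begin with, but at least the AI never put the work down because it was tired.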

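The UI also exceeded my expectations, and UIToolkit in particular was impressive. At the time, some people considered UIToolkit a poor fit for in-game UI, and I started out half in doubt myself. Yet the combination of Antigravity and Gemini 3.0 Pro handled USS-based layout and screen composition better than I expected. Considering that I was less satisfied when I later tried something similar with Claude and Codex, Gemini seems to have been an unusually good fit at that point.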

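This was the first point at which I felt that a small game could largely be entrusted to AI. This was not a matter of copying down a few lines of code. Deploying a mini-game to WebGL and attaching a ranking system is clearly close to real-world work.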

A Person Still Had to Steer

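That does not mean I could simply hand things over and relax. Every so often, hallucinations led it to modify the wrong code, give guidance with incorrect explanations, or propose code that was poor from a security standpoint. The project kept moving because I watched continuously and corrected these things. Had I trusted the output and moved on, it would have been quite risky.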

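The worst tangle was where physics met state transitions. In FlyingCat, each game step clearly determines when physics should be on and when it should be off, but this was being applied one frame off, which threw the flight flow out of alignment. I started with Unity's built-in physics, but as development went on I increasingly needed deliberately non-physical handling depending on the state, so in the end I dropped the built-in physics and rewrote it as a custom implementation.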
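As an illustration of that idea, the sketch below gates a hand-written integrator on an explicit game phase, so that the phase check and the motion update happen inside the same tick. It is a simplified Python sketch, not the project's custom Unity physics. The names (`Phase`, `Body`, `launch`, `step`) and the constants are hypothetical, and the landing behavior (vertical velocity zeroed, horizontal velocity damped on each ground contact) is an assumption chosen to keep the example short.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Phase(Enum):
    AIMING = auto()   # choosing angle and power; physics is off
    FLYING = auto()   # custom physics is on
    STOPPED = auto()  # game over; physics is off


GRAVITY = -9.8      # assumed value, units per second squared
FRICTION = 0.8      # assumed horizontal damping per ground contact
STOP_SPEED = 0.1    # assumed threshold below which the body counts as stopped


@dataclass
class Body:
    x: float = 0.0
    y: float = 0.0
    vx: float = 0.0
    vy: float = 0.0
    phase: Phase = Phase.AIMING


def launch(body: Body, vx: float, vy: float) -> None:
    """Set the launch velocity and switch physics on in the same call."""
    body.vx, body.vy = vx, vy
    body.phase = Phase.FLYING


def step(body: Body, dt: float) -> None:
    """Advance one fixed tick.

    The phase is checked before any integration, so a body that is not
    FLYING never moves, and a transition to STOPPED takes effect within
    the tick that caused it.
    """
    if body.phase is not Phase.FLYING:
        return

    # Semi-implicit Euler: update velocity first, then position.
    body.vy += GRAVITY * dt
    body.x += body.vx * dt
    body.y += body.vy * dt

    # Ground contact: clamp to the ground and damp horizontal speed.
    if body.y <= 0.0:
        body.y = 0.0
        body.vy = 0.0
        body.vx *= FRICTION
        if abs(body.vx) < STOP_SPEED:
            body.vx = 0.0
            body.phase = Phase.STOPPED
```

Because the phase and the integration live in one function, there is no separate "enable physics" flag that could lag a frame behind the game state. That is the design choice this sketch is meant to show.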

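The AWS integration was similar. The AI helped with wiring up the feature itself, but its guidance was often outdated or too abbreviated, so I frequently could not find the menu it described in the actual console. Here the AI was useful only as far as pointing me in the right direction. The final verification still had to be done by a person.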

The Standard FlyingCat Left Me With

After this experience, I stopped seeing vibe coding as a novelty toy. I saw its potential at least at the mini-game scale, and I began to think it could be carried over to larger projects.

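At the same time, my standard became clear. You can hand implementation to AI, but directing and reviewing remain a person's job. That holds especially for areas such as state transitions, physics, security, and external service integration, where one early mistake makes everything after it harder.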

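The conclusion I took from FlyingCat is simple. Vibe coding was no longer at the level of a toy. Nor was it a tool I could hand everything to and walk away from. Only after actually shipping one small game did both of those points come into sharp focus at once.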